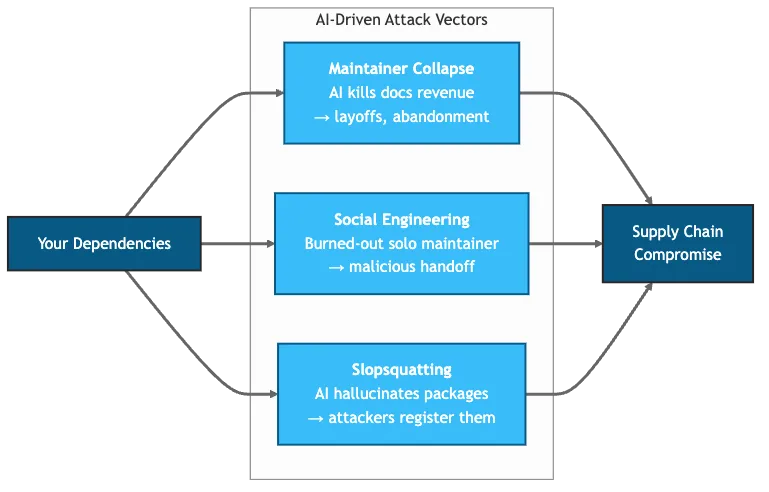

A CI pipeline trusts 400 packages. Last week, one of them laid off 75% of its engineering team. Two years ago, another nearly shipped a backdoor to every major Linux distribution. Attackers are now registering package names that only exist because an AI hallucinated them.

Three incidents. Three attack vectors. One common thread: AI is reshaping software supply chain risk faster than most security programs can adapt.

Most organizations scan for CVEs and maintain an SBOM. Few monitor for maintainer burnout, AI-driven revenue collapse, or packages that only exist because an LLM invented them. The following framework closes those gaps.

Figure 1: Three AI-driven attack vectors targeting a software dependency chain.

Contents

- What Are the Three Attack Vectors?

- Why Does This Matter at Enterprise Scale?

- How Does the Two-Tier Ecosystem Create Different Risks?

- How Should Organizations Assess AI Exposure Risk?

- How Does Vibe Coding Multiply Supply Chain Risk?

- How Can Organizations Defend Against AI Supply Chain Attacks?

- How Will Relicensing Reshape the Stack?

- References

What Are the Three Attack Vectors?

AI does not just accelerate existing supply chain risks. It creates new ones.

Vector 1: Maintainer Collapse (AI-Accelerated)

On January 6, 2026, Adam Wathan attributed Tailwind’s layoffs directly to AI’s brutal impact on their business. Documentation traffic dropped 40%. Revenue collapsed 80%. Yet Tailwind CSS downloads keep climbing.

The mechanism is simple: developers ask Copilot for a Tailwind grid layout. The AI generates it. No documentation visit. No discovery of Tailwind UI. No conversion. The developer gets value. The maintainer gets nothing.

This is not a failing product. It is a failing business model, and not unique to Tailwind. Any project monetizing through documentation traffic faces the same exposure.

Meanwhile, curl maintainer Daniel Stenberg scrapped the project’s bug bounty program on January 21, 2026, citing “intact mental health.” Twenty AI-generated vulnerability reports flooded HackerOne in January alone. None identified actual vulnerabilities. Researchers paste code into LLMs, submit the hallucinated analysis, then loop follow-up questions through the same models. The result: maintainers spend hours triaging garbage instead of shipping code.

Different mechanism, same outcome. Two forces compete: AI lowers the cost of writing software (beneficial for maintainers), but it also diverts users away from the direct engagement that funds maintainers (unsustainable for business models). Koren et al. (2026) model this formally and show the demand-diversion channel dominates. Stack Overflow activity has declined roughly 25% since ChatGPT’s launch, following the same pattern as Tailwind.

Vector 2: Social Engineering of Solo Maintainers

In March 2024, Microsoft engineer Andres Freund discovered a backdoor in XZ Utils days before it would have shipped to most Linux distributions. CVE-2024-3094 scored a perfect 10.0. The backdoor enabled remote code execution through SSH on affected systems.

The attack took two years. A contributor using the name “Jia Tan” gained the sole maintainer’s trust through legitimate contributions, then inserted malicious code. The maintainer was burned out, working alone, grateful for help.

This pattern repeats. The event-stream incident in 2018 followed the same playbook: a maintainer who had moved on handed control to a volunteer, and the new owner inserted cryptocurrency-stealing code. XZ Utils proved the technique works at infrastructure scale.

Two years later, the technique has evolved. Attackers now use LLMs to maintain technically helpful, perfectly patient personas over months, bypassing the “vibe check” that once caught human bad actors.

Vector 3: Slopsquatting (AI-Native)

This attack vector did not exist before LLMs. Slopsquatting exploits models that confidently recommend nonexistent packages. Roughly one in five AI-suggested packages points to a name that was never published (Spracklen et al., 2025).

The attack flow:

- Researchers run popular LLMs and collect hallucinated package names

- Attackers register those names on npm, PyPI, or RubyGems with malicious payloads

- Developers install AI-suggested packages without validation

- Malicious code executes

Unlike typosquatting, attackers do not need to guess which names developers might mistype. The model identifies exactly which fake packages to register. Names like “aws-helper-sdk” and “fastapi-middleware” appear in AI-generated code but never existed until attackers registered them.

The defense is straightforward: verify that packages existed before the commit date. This check catches AI-hallucinated packages that attackers registered after the model suggested them:

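```python
import requests
from datetime import datetime, timezone

def check_package_age(name: str, commit_date: str) -> bool:
    """Return True if the npm package was published before commit_date (ISO 8601)."""
    resp = requests.get(f"https://registry.npmjs.org/{name}", timeout=10)
    if resp.status_code == 404:
        return False  # Package does not exist
    resp.raise_for_status()
    pkg = resp.json()
    published = datetime.fromisoformat(pkg["time"]["created"].replace("Z", "+00:00"))
    committed = datetime.fromisoformat(commit_date.replace("Z", "+00:00"))
    if committed.tzinfo is None:
        committed = committed.replace(tzinfo=timezone.utc)  # Treat naive dates as UTC
    return published < committed
```

Code Snippet 1 (scripts/validate_deps.py): Detect slopsquatting by checking package age. Conceptual implementation only.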
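The attack flow also names PyPI, so the same age check can be sketched for Python packages. The version below is a minimal illustration, not part of the original snippet: it assumes PyPI's public JSON endpoint (`https://pypi.org/pypi/<name>/json`) and its `releases` / `upload_time_iso_8601` fields, and the function names `first_upload` and `pypi_existed_before` are hypothetical.

```python
import json
from datetime import datetime
from typing import Optional
from urllib.error import HTTPError
from urllib.request import urlopen


def first_upload(pypi_json: dict) -> Optional[datetime]:
    """Earliest file upload time across all releases, or None if no files exist."""
    times = [
        datetime.fromisoformat(f["upload_time_iso_8601"].replace("Z", "+00:00"))
        for files in pypi_json.get("releases", {}).values()
        for f in files
    ]
    return min(times) if times else None


def pypi_existed_before(name: str, commit_date: datetime) -> bool:
    """True if `name` had an upload on PyPI before `commit_date` (timezone-aware)."""
    try:
        with urlopen(f"https://pypi.org/pypi/{name}/json", timeout=10) as resp:
            data = json.load(resp)
    except HTTPError as err:
        if err.code == 404:
            return False  # Package does not exist
        raise
    first = first_upload(data)
    return first is not None and first < commit_date
```

Keeping the date logic in `first_upload` separate from the network call makes it possible to unit-test the check against saved registry responses.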

Why Does This Matter at Enterprise Scale?

Sonatype’s 2024 State of the Software Supply Chain report documented 512,847 malicious packages discovered between November 2023 and November 2024. A 156% year-over-year increase. The Verizon 2025 DBIR found that 30% of breaches now involve third-party components, double the previous year.

Log4Shell proved how fast these risks materialize. According to Wiz and EY, 93% of enterprise cloud environments were affected. Four years later, 13% of Log4j downloads from Maven Central still contain the vulnerable version, roughly 40 million vulnerable downloads per year.

These vectors do not operate in isolation. They cascade. The same “software-begets-software” feedback loop that drove OSS growth now amplifies contraction: fewer maintainers produce fewer packages, which reduces ecosystem quality, which further weakens incentives to share. At 70% vibe coding adoption, engagement-based monetization drops roughly 70%, but OSS entry can only sustain an 11% decline before projects start disappearing. That 59-percentage-point gap is the crisis window.

Monitoring the right signals matters more than counting CVEs.

How Does the Two-Tier Ecosystem Create Different Risks?

Not all dependencies face equal exposure. A two-tier structure is emerging.

Tier 1: Foundation-Backed Projects

CNCF, Apache, and Linux Foundation projects operate differently. Kubernetes maintainers are typically employed by member companies. Corporate membership dues fund development. Governance structures distribute responsibility.

These projects still face sustainability challenges: burnout, security maintenance burdens, contributor fatigue. Foundation backing buffers against documentation-traffic collapse, but it does not confer full immunity. Corporate membership dues often correlate with ecosystem health; if the broader ecosystem contracts, so does corporate willingness to fund.

Tier 2: Indie and VC-Backed Projects

Tailwind, Bun (pre-acquisition), curl, and thousands of smaller projects depend on sponsorships, consulting revenue, or VC runway. Many have single maintainers. The bus factor is often one.

These projects underpin the modern web. They are also most exposed to all three attack vectors.

Most enterprise stacks span both tiers. Kubernetes (Tier 1) might orchestrate containers running applications built with Tailwind (Tier 2). The risk profiles differ, and monitoring should reflect that.

How Should Organizations Assess AI Exposure Risk?

Traditional dependency scanning catches CVEs. It does not catch maintainer burnout, revenue collapse, or AI-hallucinated packages. Additional signals are needed.

The 5-Signal AI Exposure Audit

- Funding model: Corporate-backed or sponsorship-dependent? Check GitHub Sponsors, Open Collective, or company backing. Sponsorship-dependent projects face higher AI exposure.

- Contributor count: Bus factor greater than three? XZ Utils had one active contributor. Look at commit history and active contributors over the past 12 months.

- Governance: Foundation membership or solo maintainer? CNCF and Apache projects have succession plans. Solo projects often do not.

- AI exposure score: Docs-driven monetization (high exposure) or infrastructure utility (lower exposure)? UI libraries and developer tools face higher risk than compression utilities or parsers.

- Recent signals: Layoffs, acquisition talks, burnout posts? Monitor project blogs, maintainer social media, and GitHub discussions.

Decision Thresholds

The following framework maps signals to response levels:

| Risk Level | Signals | Action |
|---|---|---|
| Monitor | 1-2 signals, Tier 1 project | Quarterly review |
| Watch | 2-3 signals, any tier | Monthly review, identify alternatives |
| Mitigate | 3+ signals, Tier 2 project | Sponsor, fork, or migrate |
| Critical | Active incidents (layoffs, security events) | Immediate review, contingency plan |

Table 1: Decision thresholds for dependency risk response.
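The five signals and the thresholds in Table 1 can be combined into a small classifier. The sketch below is illustrative only. The `AuditResult` type and its field names are hypothetical, and because the table's rows overlap (two signals on a Tier 1 project match both Monitor and Watch), it assumes the most severe matching level wins. It also assumes that zero signals means no action, a case the table does not list.

```python
from dataclasses import dataclass


@dataclass
class AuditResult:
    """One dependency's audit outcome; each flag is True when the signal is a concern."""
    name: str
    tier: int                      # 1 = foundation-backed, 2 = indie or VC-backed
    sponsorship_dependent: bool    # Signal 1: funding model
    low_bus_factor: bool           # Signal 2: contributor count
    solo_governance: bool          # Signal 3: governance
    docs_driven_revenue: bool      # Signal 4: AI exposure score
    recent_stress: bool            # Signal 5: recent signals
    active_incident: bool = False  # Layoffs or security events in progress

    def signal_count(self) -> int:
        return sum([self.sponsorship_dependent, self.low_bus_factor,
                    self.solo_governance, self.docs_driven_revenue,
                    self.recent_stress])


def risk_level(dep: AuditResult) -> str:
    """Map an audit result to a Table 1 level, preferring the most severe match."""
    n = dep.signal_count()
    if dep.active_incident:
        return "Critical"
    if n >= 3 and dep.tier == 2:
        return "Mitigate"
    if n >= 2:
        return "Watch"
    if n >= 1:
        return "Monitor"
    return "None"  # Assumption: Table 1 has no row for zero signals
```

A Tier 2 project with sponsorship dependence, a bus factor of one, and solo governance would land in Mitigate; the same three signals on a Tier 1 project would land in Watch.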

Enterprise Application

Consider a fintech platform with 400 npm dependencies. Traditional scanning surfaces CVEs. The AI exposure audit surfaces different risks:

| Dependency Type | Count | High AI Exposure | Action |
|---|---|---|---|
| UI frameworks | 12 | 4 | Review monetization models |
| Build tools | 8 | 2 | Track bus factor, burnout signals |
| Infrastructure | 45 | 1 | Lower priority |
| Utility libraries | 335 | 23 | Automate monitoring |

Table 2: AI exposure audit across dependency categories.

The 23 high-exposure utility libraries are not all equal. Prioritize by criticality: is it in the authentication path? The payment flow? The deployment pipeline?

How Does Vibe Coding Multiply Supply Chain Risk?

Andrej Karpathy coined “vibe coding” in February 2025: developers who “fully give in to the vibes” and let AI generate entire applications. The practice is accelerating, and it compounds every vector above.

Slopsquatting is the visible risk. The less visible one is license laundering. AI training data includes GPL and AGPL (copyleft) code. When developers accept AI-generated output without review, they risk shipping restrictively licensed code as proprietary, and the provenance is untraceable. Tools like FOSSA and Snyk License Compliance can scan for license violations in CI, but they catch dependencies, not AI-generated source code. Manual review remains essential.

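```yaml
name: License check
on: [pull_request]

jobs:
  license-scan:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout repository
        uses: actions/checkout@v6
      # Upload the dependency analysis that the test step evaluates
      - name: Analyze dependencies with FOSSA
        uses: fossas/fossa-action@v1
        with:
          api-key: ${{ secrets.FOSSA_API_KEY }}
      # Fail the build if the analysis violates license policy
      - name: Test against FOSSA policy
        uses: fossas/fossa-action@v1
        with:
          api-key: ${{ secrets.FOSSA_API_KEY }}
          run-tests: true
```

Code Snippet 4 (.github/workflows/license-check.yml): FOSSA scans dependencies for GPL/AGPL violations and fails the build on policy breach.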

AI-assisted commits warrant validation: flagging new dependencies, verifying packages existed before the commit date (Code Snippet 1), and checking maintainer history. For teams using AI agents extensively, subagent architectures can dedicate a validation agent to check every dependency before acceptance.
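The first of those steps, flagging new dependencies, can be sketched as a comparison of two versions of `package.json`. This is a minimal illustration under stated assumptions: the function name `new_dependencies` is hypothetical, and the manifest contents would come from the base and head commits of a pull request, which is left out here.

```python
import json


def new_dependencies(old_manifest: str, new_manifest: str) -> list[str]:
    """Return package names present in the new package.json but not in the old one."""
    sections = ("dependencies", "devDependencies", "optionalDependencies")

    def names(manifest: str) -> set[str]:
        data = json.loads(manifest)
        return {name for s in sections for name in data.get(s, {})}

    return sorted(names(new_manifest) - names(old_manifest))


old = '{"dependencies": {"react": "^18.0.0"}}'
new = '{"dependencies": {"react": "^18.0.0", "aws-helper-sdk": "^1.0.0"}}'
print(new_dependencies(old, new))  # ['aws-helper-sdk']
```

Each flagged name can then be passed to the package-age check in Code Snippet 1 before the commit is accepted.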

How Can Organizations Defend Against AI Supply Chain Attacks?

Extend the Toolchain

Existing tools catch CVEs. Tools that catch sustainability risks fill the gap:

- OpenSSF Scorecard (scorecard.dev) scores projects on maintenance activity, security practices, and contributor diversity, a rough proxy for bus factor

- deps.dev provides dependency graphs with contributor data

- Socket.dev detects supply chain attacks including slopsquatting patterns

Scorecard and Socket integrate directly into AI-augmented CI/CD pipelines via GitHub Actions, flagging risky dependencies before merge.

For a quick CLI check:

```bash
# Check a project's health score (0-10); expects GITHUB_AUTH_TOKEN in the environment
scorecard --repo=github.com/tailwindlabs/tailwindcss
```

Code Snippet 2: OpenSSF Scorecard CLI checks a repository’s security health score (0-10).

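To enforce this in CI, integrate Scorecard into a GitHub Actions workflow. The Scorecard action scores the repository it runs in, so the steps below fail the build when that repository scores below 7 out of 10. Gating a third-party dependency means running the same check against the dependency's source repository:

```yaml
- name: Run OpenSSF Scorecard
  uses: ossf/scorecard-action@v2.4.0  # Pin to a release tag or commit SHA
  with:
    results_file: scorecard.json
    results_format: json  # Required input; JSON output carries the aggregate score
- name: Block on low score
  run: |
    # jq -e exits non-zero when the expression is false, failing the step
    jq -e '.score >= 7' scorecard.json
```

Code Snippet 3 (.github/workflows/scorecard.yml): GitHub Actions steps that enforce a minimum health score of 7/10 in CI.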
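To apply the same threshold to third-party dependencies rather than to the repository running the workflow, one option is to look up each dependency's published result through the public OpenSSF Scorecard REST API. The sketch below assumes that API's `projects/github.com/<owner>/<repo>` endpoint and a top-level `score` field in its response; the function names are hypothetical, and repositories without a published result will raise an HTTP error that a real pipeline would need to handle.

```python
import json
from urllib.request import urlopen

SCORECARD_API = "https://api.securityscorecards.dev/projects/github.com"


def fetch_scorecard(owner: str, repo: str) -> dict:
    """Fetch the published Scorecard result for a GitHub repository."""
    with urlopen(f"{SCORECARD_API}/{owner}/{repo}", timeout=10) as resp:
        return json.load(resp)


def below_threshold(results: dict[str, dict], minimum: float = 7.0) -> list[str]:
    """Return repositories whose aggregate score is under `minimum`."""
    return sorted(
        repo for repo, result in results.items()
        if result.get("score", 0.0) < minimum  # Missing score counts as failing
    )
```

A CI step could call `fetch_scorecard` for the source repository of each direct dependency, collect the results in a dictionary keyed by repository, and fail the job when `below_threshold` returns a non-empty list.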

Sponsor Strategically

Tooling catches symptoms. Sponsorship addresses the cause. If a Tier 2 project in a critical path shows stress signals, $500/month buys a working relationship with the maintainer and early warning of sustainability issues.

But individual sponsorship is a stopgap, not a solution. The systemic fix requires platform-level change. One proposed model: AI coding platforms already track which packages they import, so they could redistribute subscription revenue to maintainers based on attributed usage, a “Spotify for open source.” The infrastructure exists; the coordination does not. Until it does, direct sponsorship remains the best lever CTOs have.
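The proposed redistribution model amounts to a pro-rata split of a revenue pool by attributed usage. The sketch below is purely illustrative: no platform is known to implement it, and the function name, the unit (cents), and the usage counts are all hypothetical.

```python
def allocate_revenue(pool_cents: int, usage: dict[str, int]) -> dict[str, int]:
    """Split a revenue pool across packages in proportion to attributed usage."""
    total = sum(usage.values())
    if total == 0:
        return {pkg: 0 for pkg in usage}
    # Floor each share; any remainder stays undistributed in this sketch.
    return {pkg: pool_cents * count // total for pkg, count in usage.items()}


print(allocate_revenue(10_000, {"tailwindcss": 3, "curl": 1}))
# {'tailwindcss': 7500, 'curl': 2500}
```

The arithmetic is the easy part. Attributing usage fairly and agreeing on who funds the pool are the coordination problems that remain.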

The companies that sponsored Log4j before Log4Shell had maintainer relationships when the crisis hit. The ones that did not scrambled with everyone else.

How Will Relicensing Reshape the Stack?

The OSS ecosystem is adapting. Bun’s acquisition by Anthropic shows one path: AI companies absorbing critical infrastructure. More acquisitions are likely.

Experimental protocols like x402 attempt to let AI agents pay for resource access. Still early, but architecturally sound.

The two-tier ecosystem is crystallizing. Foundation-backed projects will weather it better. Indie projects will face consolidation pressure through acquisition, abandonment, new business models, or relicensing. HashiCorp moved Terraform to the BSL in 2023. Redis followed months later with its own source-available licensing. Sentry, MariaDB, and Elastic had already made similar moves. The pattern is clear: when sponsorships fail and AI erodes documentation revenue, restrictive licenses become the survival strategy. For enterprises, this means dependencies assumed to be permissively licensed may not stay that way.

Before the Next Sprint

- Run OpenSSF Scorecard on the top 10 dependencies

- Check bus factor on Tier 2 critical-path projects

- Add slopsquatting validation to CI (Code Snippet 1)

AI adoption is accelerating. So are the attacks exploiting it. The question is not whether a supply chain has risk, but who sees it first. Teams that see it first have the advantage.

References

- CrowdStrike, “CVE-2024-3094 and XZ Upstream Supply Chain Attack” (2024) - https://www.crowdstrike.com/en-us/blog/cve-2024-3094-xz-upstream-supply-chain-attack/

- Goodin, Dan, “Overrun with AI slop, cURL scraps bug bounties” (Ars Technica, 2026) - https://arstechnica.com/security/2026/01/overrun-with-ai-slop-curl-scraps-bug-bounties-to-ensure-intact-mental-health/

- Infosecurity Magazine, “Vibe Coding: A Hidden Security Risk of the AI Era” (2025) - https://www.infosecurity-magazine.com/opinions/vibe-coding-security-risk-ai/

- Karpathy, Andrej, “vibe coding” (X, 2025) - https://x.com/karpathy/status/1886192184808149383

- Koren, Miklos et al., “Vibe Coding Kills Open Source” (arXiv, 2026) - https://arxiv.org/abs/2601.15494

- Snyk, “Slopsquatting: New AI Hallucination Threats” (2025) - https://snyk.io/articles/slopsquatting-mitigation-strategies/

- Sonatype, “10th Annual State of the Software Supply Chain” (2024) - https://www.sonatype.com/state-of-the-software-supply-chain/introduction

- Spracklen, Joseph et al., “We Have a Package for You! A Comprehensive Analysis of Package Hallucinations by Code Generating LLMs” (arXiv, 2025) - https://arxiv.org/abs/2406.10279

- Sumner, Jarred, “Bun is joining Anthropic” (2025) - https://bun.sh/blog/bun-joins-anthropic

- Verizon, “2025 Data Breach Investigations Report” - https://www.verizon.com/business/resources/reports/dbir/

- Wathan, Adam, “GitHub comment on Tailwind layoffs” (2026) - https://github.com/tailwindlabs/tailwindcss.com/pull/2388#issuecomment-3717222957